Elon Musk recently launched his new venture, x.ai, whose goal is to “. . . understand the true nature of the universe” and, according to this tweet, “. . . to understand reality”.

I think this is great, and I have some opinions and suggestions about how this can work. But before that, why should anyone listen to me?

Why am I saying this, and why should x.ai - or anyone - read this?

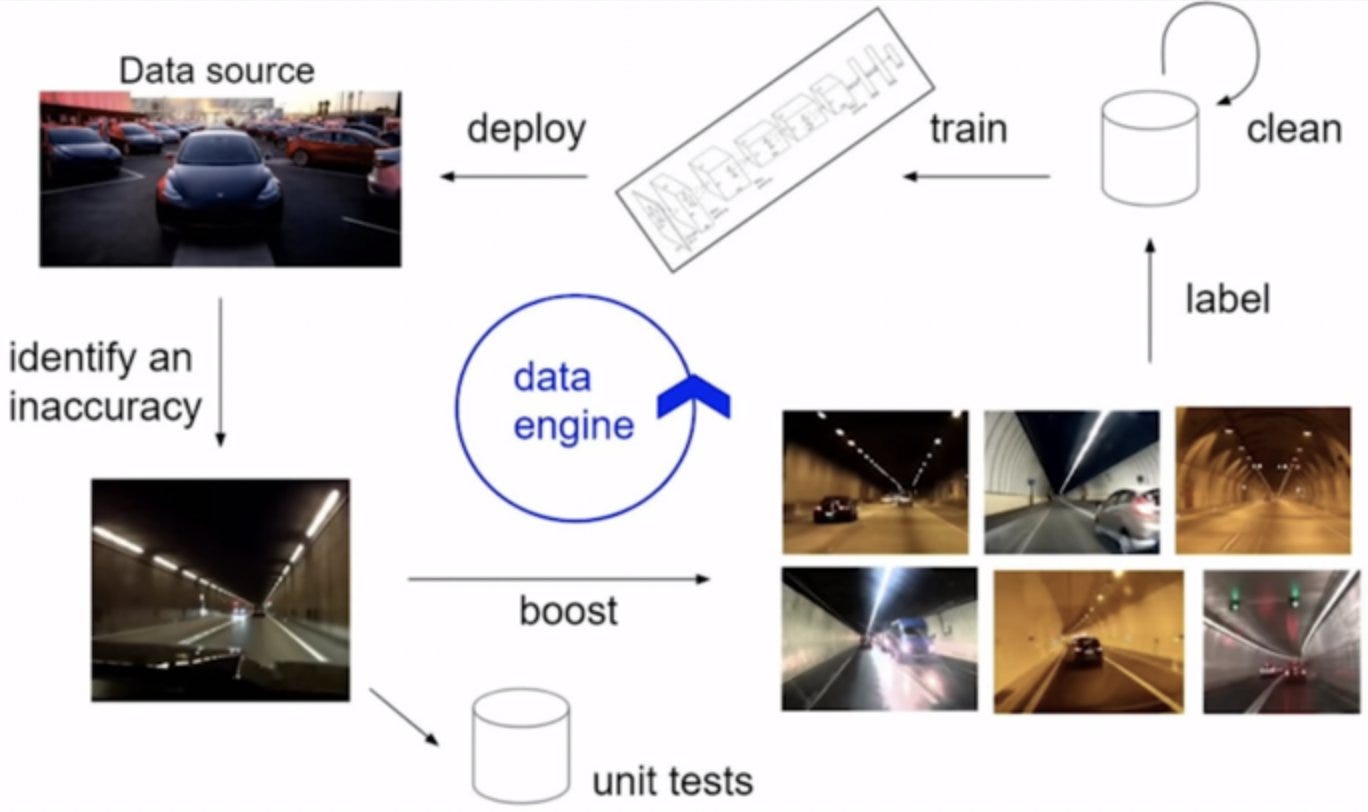

I have spent my career building machine learning and deep learning models, and building the infrastructure around these models. I began building and experimenting with different deep learning models around 2012-14, when the first successful deep network (AlexNet) was popularized. I was at Qualcomm Research in San Diego, helping build the infrastructure to run these models on Qualcomm processors. In the process, I also contributed (1, 2, 3) to the original AlexNet GitHub repository, as this was the first GPU-enabled codebase in the world that ran deep learning models fast. Currently, I am part of a startup that provides value to our customers by developing deep learning models very similar to Tesla's. I have learned a lot from the publicly available talks and technology day discussions about how the data engine works. This is an incredibly powerful technology, and it requires many components to work together well for the final AI system to perform the tasks we want it to do.

In addition to the advances made by Tesla and other companies in streamlining deep learning data pipelines, there is another advance that happened in 2020: the advent of Large Language Models (LLMs). These are language models trained on large swaths of internet data. With a good user interface like ChatGPT, they respond to our queries as if we were talking with a friend: they do simple math calculations, teach us physics concepts, and answer our questions about how to install a specific piece of software. A variant of these models also allows us to generate awesome imagery. All this progress creates the impression that we are really close to creating general intelligence. Unfortunately, that is just an illusion. Using existing data and hoping to generate new knowledge is the wrong approach. x.ai wants to uncover the true nature of the universe and understand reality. We are not going to find answers to these questions in existing internet data. These are profound questions that x.ai is venturing to answer. Humans have been pondering them for centuries. No satisfactory answers exist so far, so there is no data on the internet that answers them. Simply modeling the probability distribution of the most likely sequence of words would only give the appearance of coherence.

This is where we need to take a step back and think about the gap between what we want to achieve and the approach we are going to take to achieve it. This is where x.ai has a decision to make: is it going to fall into the trap of consumer-driven demands to deliver value quickly and create a buzz, or is it going to go back to first principles and take a different approach?

What can x.ai do differently?

Real knowledge creation (like the sort x.ai purportedly wants the ability to generate) does not begin with data. It begins with a problem. This view stands in stark contrast to existing DL/ML techniques. An ML engineer is given a dataset and is asked to detect patterns in it. This is all well and good if all we want to build is a tool that helps us in some specific way. Tesla’s Autopilot is a great example of such a tool. Creating knowledge is an entirely different ball game. Real knowledge creation begins with stating a problem and taking a guess at a theory that solves it. As physicist Richard Feynman once remarked in one of his lectures, while addressing the question “How do we look for a new law?”:

“. . . in general we look for a new law by following this process, first we guess it, then we compute the consequences of the guess and see if this is right . . .”

- Richard Feynman, Seeking New Laws

This is how learning anything new works, not just finding new laws. Based on one’s current understanding of things, one guesses the best theory to solve the problem, and then one tests this hypothesis. If the test passes, one holds the hypothesis as a tentative working theory for solving the problem. The real question now is: how do we go about building a machine that follows this process to uncover new laws?
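To make the guess-and-test loop concrete, here is a minimal Python sketch. Everything in it is a toy assumption of mine: the function name `first_surviving_guess`, the candidate "laws", and the made-up observations are purely illustrative. Note that it only automates the easy half of Feynman's process (computing consequences and comparing them with observation). The guesses themselves are still supplied by a human, and generating them is exactly the hard part that remains open.

```python
from typing import Callable, Iterable, List, Optional, Tuple

# Hypothetical toy setup: a "law" maps an input x to a predicted y.
Observation = Tuple[float, float]
Law = Callable[[float], float]


def first_surviving_guess(
    guesses: Iterable[Tuple[str, Law]],
    observations: List[Observation],
    tolerance: float = 1e-9,
) -> Optional[str]:
    """Return the name of the first guess that no observation refutes."""
    for name, law in guesses:
        # Compute the consequences of the guess and compare with observation.
        if all(abs(law(x) - y) <= tolerance for x, y in observations):
            return name  # kept only as a tentative working theory
    return None  # every guess was refuted; better guesses are needed


# Made-up observations and hand-written guesses, for illustration only.
observations = [(1.0, 1.0), (2.0, 4.0), (3.0, 9.0)]
guesses = [
    ("linear: y = x", lambda x: x),
    ("doubling: y = 2x", lambda x: 2 * x),
    ("square: y = x^2", lambda x: x * x),
]

result = first_surviving_guess(guesses, observations)
print(result)
```

A surviving guess is never proven. A single new observation that contradicts it would send us back to guessing.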

Physicist and philosopher David Deutsch has written extensively about the philosophical aspect of the difference between traditional AI and the potential future AGI.

“I am convinced that the whole problem of developing AGIs is a matter of philosophy, not computer science or neurophysiology, and that the philosophical progress that is essential to their future integration is also a prerequisite for developing them in the first place” - David Deutsch - How close are we to creating artificial intelligence?

So much for the philosophical challenges. There are obviously enormous engineering challenges ahead of us as well.

All existing technologies built and used by humanity are tools that enhance our ability to do things more efficiently, with less friction. AGI is the opposite of any such tool: it will not have a direct application that humanity can use immediately. AGI will have a mind of its own, and if it has an inclination to work with humanity, it will be a partner of humanity and not a tool.

What are some challenges in building such an entity? For starters, humanity has never before built something that doesn’t directly help solve a problem immediately. Therefore, the usual way of driving a company, by providing value to customers quickly and bringing in revenue, won’t work. I have written more about this. The challenge of building an AGI is going to be like raising a child, with course correction as needed while it (the AGI) is learning the tricks of navigating the world and learning to learn. This will come after we have an instantiation of an AGI, which in itself would not have any specific goal designed by humans. As David Deutsch has said:

“The moral component, the cultural component, the element of free will—all make the task of creating an AGI fundamentally different from any other programming task. It’s much more akin to raising a child. Unlike all present-day computer programs, an AGI has no specifiable functionality—no fixed, testable criterion for what shall be a successful output for a given input. Having its decisions dominated by a stream of externally imposed rewards and punishments would be poison to such a program, as it is to creative thought in humans.”

- David Deutsch in Possible Minds

Furthermore, he says, defining any testable objective (as is done in traditional machine learning model building) is going to be futile. This is true; however, there needs to be some objective that the program is able to keep at the forefront.

Before getting into some of these functions, first imagine a laptop running a low-footprint operating system, like headless Linux, with minimal overhead. It is not receiving any input or generating any output at the moment. In the background, there are processes running (daemons, as they are called in CS parlance) that keep watch for any potential input, i.e. a keystroke or a mouse movement. It also runs daemons that keep an eye on aspects relevant to the “well-being” of the system, usually called watchdogs.
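For readers who have not met a watchdog before, here is a rough Python sketch of the idea. The names `Watchdog` and `start_monitor` are hypothetical and do not belong to any real operating system API. Real watchdogs are typically implemented in the kernel or in hardware, so treat this only as an illustration of the pattern: the system must keep signalling that it is alive, and a background thread reacts when the signal stops.

```python
import threading
import time
from typing import Callable


class Watchdog:
    """Reports the system unhealthy if no heartbeat arrives within `timeout` seconds."""

    def __init__(self, timeout: float, clock: Callable[[], float] = time.monotonic):
        self.timeout = timeout
        self.clock = clock  # injectable so the example can use a fake clock
        self.last_beat = clock()

    def heartbeat(self) -> None:
        # Called by the monitored system to say "I am still alive".
        self.last_beat = self.clock()

    def is_healthy(self) -> bool:
        return (self.clock() - self.last_beat) <= self.timeout


def start_monitor(
    watchdog: Watchdog,
    on_failure: Callable[[], None],
    interval: float,
    stop: threading.Event,
) -> threading.Thread:
    """Check the watchdog every `interval` seconds in a background daemon thread."""

    def loop() -> None:
        # Event.wait returns False on timeout, so this loops until `stop` is set.
        while not stop.wait(interval):
            if not watchdog.is_healthy():
                on_failure()
                return

    thread = threading.Thread(target=loop, daemon=True)
    thread.start()
    return thread


# Deterministic demonstration using a fake clock instead of real time.
now = [0.0]
dog = Watchdog(timeout=5.0, clock=lambda: now[0])
now[0] = 3.0
healthy_at_3 = dog.is_healthy()  # 3 seconds since the last heartbeat
now[0] = 9.0
healthy_at_9 = dog.is_healthy()  # 9 seconds since the last heartbeat
```

The point to carry forward is that even an idle system is never truly idle: something in the background is always watching for input and for threats to its own functioning.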

Now imagine an AGI program that replaces this operating system. No modules are programmed into this operating system by humans. The OS is primed only with the basic capabilities discussed below. It has a module that takes in information about the world around it via sensors, and it has actuators (motors, pulleys, etc.). There is no specialized software or neural network model running on this AGI OS. Instead, there is a module that is able to set goals for itself, given what is already stored (i.e. accumulated knowledge) on the AGI’s hard disk.

Below is a list of the minimal functions the AGI program should be able to perform. You may notice that this list has a lot in common with newborns, who have these abilities from birth. I suspect it will be paramount that an AGI, when it is born, is able to do these things.

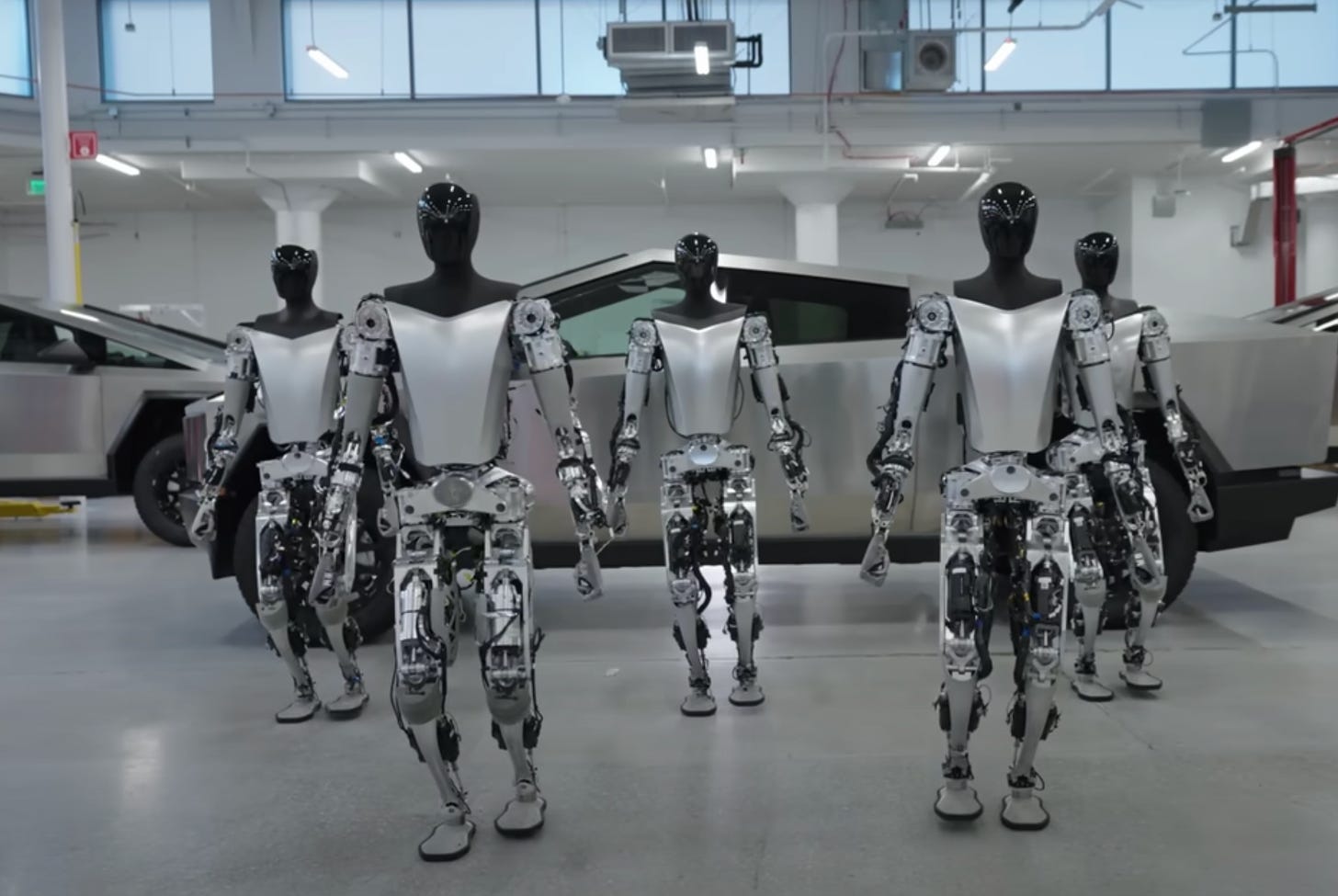

Embodiment

The AGI needs to be able to reach out of its world of bits and sense the world of atoms around it. It also needs to be able to physically act upon that world. This gives the AGI the autonomy it needs, without which it would have to depend on other generally intelligent entities such as humans. As soon as an AGI depends on other entities, there is a risk that its goals and the other entity’s goals do not align, and that is not good. With Tesla’s Optimus program, Elon and x.ai are in a really good spot. In his 2012 blog post about the state of AI and computer vision, researcher Andrej Karpathy has this to say about embodiment:

“Thinking about the complexity and scale of the problem further, a seemingly inescapable conclusion for me is that we may also need embodiment, and that the only way to build computers that can interpret scenes like we do is to allow them to get exposed to all the years of (structured, temporally coherent) experience we have, ability to interact with the world, and some magical active learning/inference architecture that I can barely even imagine when I think backwards about what it should be capable of.”

- Andrej Karpathy (The state of Computer Vision and AI: we are really, really far away)

Capacity to learn languages

Language is indispensable in this context, as with it the AGI will be able to communicate with other intelligent agents. It needs the ability not only to learn an existing language such as English but, more importantly, to learn language from scratch (the way a baby does). It should also be able to wield language such that if it invents a revolutionary new concept, such as an answer to the questions x.ai is setting out to solve, it can communicate its new ideas to other intelligent agents. For example, before Einstein developed the theory of relativity, the word relativity had none of the connotations his theory later gave it. He (presumably) came up with the language of relativity to mean something very specific, which is explained in his papers on relativity. The AGI agent should be able to wield language in this manner.

Capacity to be curious

Human beings are curious from birth. They begin learning new things as infants because they are curious. Everything that comes after that, all the learning, all the gathering of knowledge and skills, is based on this innate capacity of human beings to be curious. Musk hinted at this capacity in his Twitter Spaces answer:

“. . . the safest way is to build an AGI that is ‘maximum curious’ and ‘truth curious,’ and to try and minimize the error between what you think is true and what is actually true. . .”

- Elon Musk on Twitter Spaces

I am not so sure why this would be the “safest” way, though. I would like to ask him one day.

Capacity to want to survive

In the world of atoms and matter, the natural tendency is towards decay. Rocks and mountains decay, fallen trees decay, and dead animals decay. Anything that wants to keep its shape and function needs to act against the natural forces acting upon it. This requires energy and know-how. Living entities acquire this know-how either by gathering knowledge (as humans do) or by using knowledge embedded in their DNA (as plants and non-human animals do). The AGI agent we are building needs to have an innate capacity to accrue knowledge that helps it survive and fend off nature and other entities. In his short book “What is Life?”, the physicist Erwin Schrodinger says this:

“What is the characteristic feature of life? When is a piece of matter said to be alive? When it goes on 'doing something', moving, exchanging material with its environment, and so forth, and that for a much longer period than we would expect an inanimate piece of matter to 'keep going' under similar circumstances.”

- Erwin Schrodinger

All the big tech companies are building AI solutions that deliver value to their investors. x.ai could be different; as Elon mentions, “… as x.ai is not a publicly traded company, it has more freedom in its approach.” This is his opportunity to do something different and attempt to build AGI. It could be the beginning of something that humanity can be thankful for, for millennia to come.